Enhanced Collaborative Filtering with Reinforcement Learning

Research Motivation

Disadvantages of RBM-based recommendation systems

- Inputs do not take into account the temporal order of user ratings

→ Does not reflect changes in the user’s taste

- The model must be retrained on the whole dataset to account for new data

→ Cannot update the system fast enough to keep up with incoming data

- Implicit feedback data is not considered

→ Cannot utilize data that may be more meaningful than explicit rating data

Suggested Model

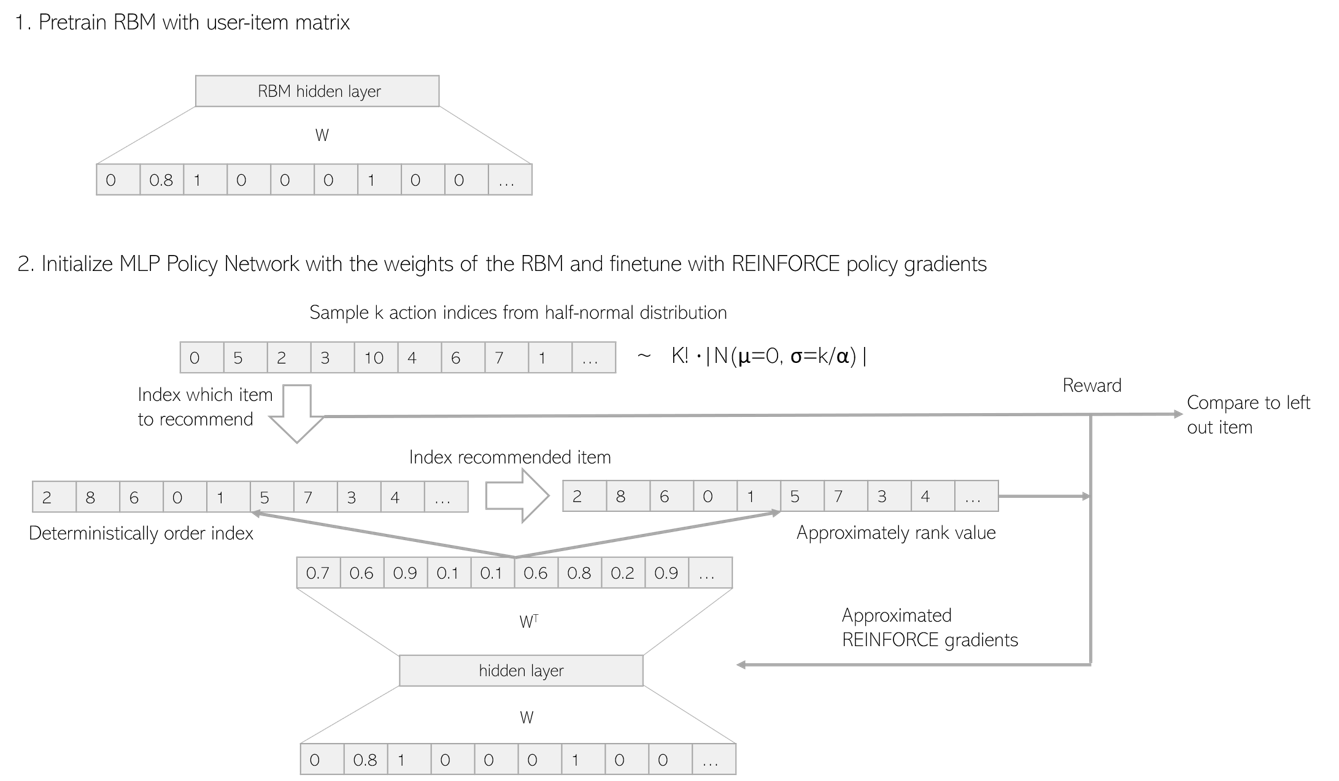

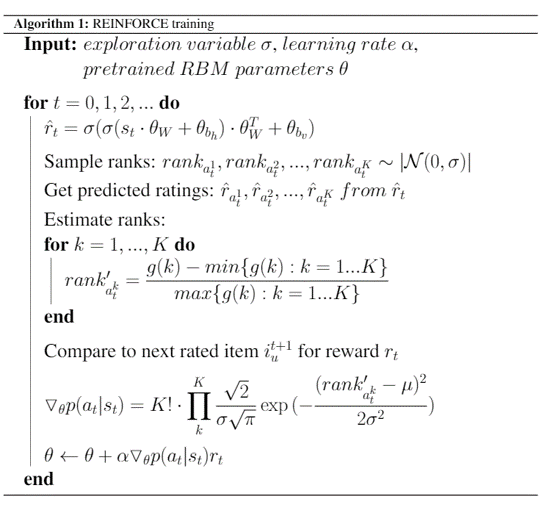

- Obtain a score r̂ for each item from the output of the RBM

- Sample K rank values from a half-normal distribution

- Select the items whose ranks match the sampled rank values and produce appropriate rewards by comparing them to the left-out items

- Approximate each item’s rank from r̂ and evaluate it under the half-normal distribution to obtain the policy gradients used to train the RBM’s parameters
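
The sampling and reward steps above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names, the scale parameter `sigma`, the choice to draw distinct ranks, and the binary reward (1 if a selected item is among the left-out items, 0 otherwise) are all assumptions made for the example. The scores are random stand-ins for the RBM output r̂, and the policy-gradient update itself is omitted.

```python
import numpy as np


def sample_ranks(rng, num_items, k, sigma):
    """Draw k distinct rank positions (0 = best) from a half-normal distribution."""
    if k > num_items:
        raise ValueError("k cannot exceed the number of items")
    ranks = []
    while len(ranks) < k:
        # |N(0, sigma^2)| is half-normal; truncating to int gives a rank index.
        r = int(abs(rng.normal(0.0, sigma)))
        if r < num_items and r not in ranks:
            ranks.append(r)
    return np.array(ranks)


def recommend_with_rewards(scores, left_out, k, sigma, rng):
    """Pick the items at the sampled ranks and reward those found in left_out."""
    order = np.argsort(-scores)  # item ids sorted from highest to lowest score
    ranks = sample_ranks(rng, len(scores), k, sigma)
    chosen = order[ranks]
    # Assumed reward: 1.0 for a left-out item, 0.0 otherwise.
    rewards = np.isin(chosen, list(left_out)).astype(float)
    return chosen, ranks, rewards


rng = np.random.default_rng(0)
scores = rng.random(50)  # hypothetical stand-in for the RBM output
chosen, ranks, rewards = recommend_with_rewards(
    scores, left_out={3, 17}, k=5, sigma=10.0, rng=rng
)
print(chosen, ranks, rewards)
```

In this sketch a small `sigma` concentrates the sampled ranks near the top of the list, while a larger `sigma` explores items further down the ranking.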

Experimentation Data

Used the MovieLens dataset to evaluate the recommendation system

- Movie rating data from the MovieLens website

- Used frequently to evaluate recommendation systems

- Selected MovieLens100K, which has 100,000 ratings, and MovieLens1M, which has 1,000,000 ratings

| | Users | Items | Ratings | Rating density |
|---|---|---|---|---|
| MovieLens100K | 1,000 | 1,700 | 100,000 | 5.88% |
| MovieLens1M | 6,000 | 4,000 | 1,000,000 | 4.17% |
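
The rating density column appears to be the number of ratings divided by the size of the user-item matrix. A short check using the rounded counts from the table (the function name is illustrative only):

```python
def rating_density(num_users, num_items, num_ratings):
    """Fraction of the user-item matrix that holds an observed rating."""
    return num_ratings / (num_users * num_items)


density_100k = rating_density(1_000, 1_700, 100_000)
density_1m = rating_density(6_000, 4_000, 1_000_000)
print(f"MovieLens100K: {density_100k:.2%}")  # 5.88%
print(f"MovieLens1M:   {density_1m:.2%}")    # 4.17%
```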

Comparison to Original RBM

MovieLens100K

| | RLRBM | RBM |
|---|---|---|
| HR@10 | 0.1481 | 0.1217 |
| HR@25 | 0.2169 | 0.2169 |
| ARHR | 0.05986 | 0.05152 |

MovieLens1M

| | RLRBM | RBM |
|---|---|---|
| HR@10 | 0.09272 | 0.08940 |
| HR@25 | 0.1813 | 0.1763 |
| ARHR | 0.04423 | 0.04353 |

- On MovieLens100K, which has fewer users and items and a higher rating density, RLRBM clearly improved HR@10 (from 0.1217 to 0.1481) and ARHR (from 0.05152 to 0.05986), while HR@25 was unchanged

- On MovieLens1M, which has a lower rating density, the gains were small on all three metrics
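
The slides do not define the metrics, so the following sketch assumes the usual leave-one-out definitions: HR@K is the fraction of users whose left-out item appears in their top-K list, and ARHR averages the reciprocal of the position at which that item is found (0 for a miss). The function name and the toy data are illustrative only.

```python
def hit_rate_and_arhr(top_k_lists, left_out_items):
    """Return (HR, ARHR) for one top-K list and one left-out item per user."""
    hits = 0
    reciprocal_sum = 0.0
    for recs, target in zip(top_k_lists, left_out_items):
        if target in recs:
            hits += 1
            # Positions are 1-based, so a hit in first place contributes 1.0.
            reciprocal_sum += 1.0 / (recs.index(target) + 1)
    num_users = len(left_out_items)
    return hits / num_users, reciprocal_sum / num_users


# Three users: a hit at position 2, a miss, and a hit at position 1.
top_k_lists = [[3, 1, 2], [5, 4, 6], [7, 8, 9]]
left_out_items = [1, 0, 7]
hr, arhr = hit_rate_and_arhr(top_k_lists, left_out_items)
print(hr, arhr)
```

ARHR rewards hits near the top of the list, so two models with the same HR@K can still differ in ARHR.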

Comparison to a Supervised Learning Approach for Additional Training on the Left-out Data

MovieLens100K

| | RLRBM | SUPERVISED |
|---|---|---|
| HR@10 | 0.1481 | 0.1217 |
| HR@25 | 0.2169 | 0.2275 |
| ARHR | 0.05986 | 0.05036 |

MovieLens1M

| | RLRBM | SUPERVISED |
|---|---|---|
| HR@10 | 0.09271 | 0.08858 |
| HR@25 | 0.1813 | 0.1738 |
| ARHR | 0.04423 | 0.04557 |

- Comparing the two training methods on the same left-out items, Reinforcement Learning outperformed Supervised Learning on most metrics; Supervised Learning was ahead only on HR@25 for MovieLens100K and on ARHR for MovieLens1M

- A possible explanation is that Supervised Learning can only train the model to prefer one specific item for a given user state, whereas Reinforcement Learning can distribute rewards probabilistically across a user’s state

Comparison of Different Values of K

- For a smaller dataset, a smaller value of K gives better recommendation performance

- For a bigger dataset, a bigger value of K gives better recommendation performance

- For data with a larger number of items and users, it is more effective to use a bigger value of K to obtain more user feedback during Reinforcement Learning training

Conclusion

- Designed a recommendation system that can reflect user feedback using Reinforcement Learning

- Showed through experiments that additional training of the recommendation model with Reinforcement Learning can improve recommendation performance

- Starting from a pretrained Collaborative Filtering model, the system can learn the correlation between users and items more deeply

- With the proposed learning method, changes in a user’s taste can be learned in real time

Future Work

- Using implicit feedback data such as clicks, and setting appropriate rewards for those actions, is expected to further improve recommendation performance

- Applying the method to a real-world system, so that the model can gather feedback online, would be the most suitable way to train and evaluate the proposed system